Worst Fit Allocation Algorithm

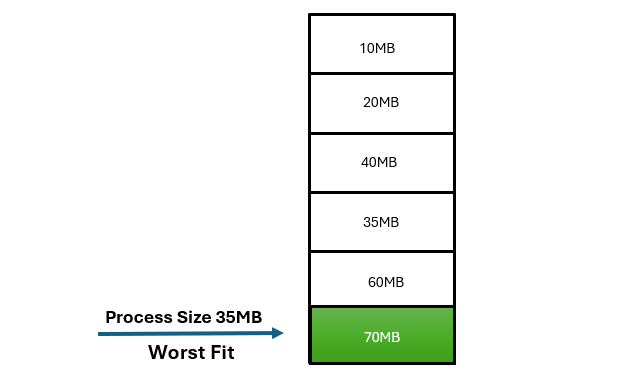

Worst Fit Allocation is a memory management approach in which a process is placed in the largest available block of memory, even when that block is far bigger than the process needs. The idea is that the space left over after the allocation stays large enough to satisfy later requests, instead of shrinking into small, unusable fragments.

| Process No. | Process Size | Block No. |
|---|---|---|

Frequently Asked Questions

What is the Worst Fit Allocation Algorithm?

The Worst Fit Allocation Algorithm is a memory management strategy used in computer systems that assigns each process to the largest available block of memory. Its goal is to keep the leftover free space in large, reusable pieces and so reduce the number of small fragments.

How does Worst Fit work?

Worst Fit searches the entire list of available memory blocks and selects the largest block that can accommodate the process’s memory requirements. If even the largest free block is too small, the request cannot be satisfied. This approach is the opposite of Best Fit, which selects the smallest block that is still large enough.
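The search described above can be sketched in a few lines of Python. This is a minimal illustration, assuming fixed partitions where each block holds at most one process; the function name `worst_fit` and the block and process sizes are hypothetical values chosen for the example.

```python
def worst_fit(block_sizes, process_sizes):
    """Return, for each process, the 1-based number of the block it
    received, or None if no free block was large enough."""
    free = [True] * len(block_sizes)  # assumption: one process per block
    allocation = []
    for size in process_sizes:
        worst = None  # index of the largest suitable free block so far
        for i, block in enumerate(block_sizes):
            if free[i] and block >= size:
                if worst is None or block > block_sizes[worst]:
                    worst = i
        if worst is None:
            allocation.append(None)
        else:
            free[worst] = False
            allocation.append(worst + 1)
    return allocation


blocks = [100, 500, 200, 300, 600]   # hypothetical block sizes
processes = [212, 417, 112, 426]     # hypothetical process sizes
result = worst_fit(blocks, processes)
for number, (size, block) in enumerate(zip(processes, result), start=1):
    print(number, size, block if block is not None else "Not allocated")
```

With these sizes the first three processes receive blocks 5, 2, and 4, and the fourth is not allocated because the remaining free blocks (100 and 200) are too small.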

Why is it called “Worst Fit”?

The algorithm is named “Worst Fit” because it deliberately chooses the worst-fitting block, the largest one, for the incoming process. The intent is that the space left over in that block stays large enough to be useful for later allocations.

What is the advantage of using Worst Fit?

The primary advantage of Worst Fit is that the fragment left over after an allocation tends to be large enough to hold another process. This avoids creating many small free spaces that no process can use, which is the main source of external fragmentation.

Does Worst Fit always perform better than other algorithms?

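No. While Worst Fit can be effective in certain scenarios, it may lead to increased fragmentation in dynamic environments where memory allocations and deallocations are frequent. Because it always consumes the largest block first, a later process that needs a large block may find none left. It might not be the best choice for all types of workloads.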
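A small comparison makes the limitation concrete. The sketch below assumes variable partitions, where a chosen hole shrinks by the size of the request; the helper `allocate` and the hole and request sizes are hypothetical. Passing `max` selects the Worst Fit policy and passing `min` selects Best Fit.

```python
def allocate(holes, requests, choose):
    """Place each request in a hole picked by `choose` (max = Worst Fit,
    min = Best Fit) and shrink that hole by the request size.
    Returns the chosen hole index per request and the remaining hole sizes."""
    holes = list(holes)  # work on a copy
    placed = []
    for size in requests:
        candidates = [i for i, hole in enumerate(holes) if hole >= size]
        if not candidates:
            placed.append(None)  # no hole is large enough
            continue
        i = choose(candidates, key=lambda j: holes[j])
        holes[i] -= size
        placed.append(i)
    return placed, holes


holes = [300, 600]      # hypothetical free holes
requests = [200, 500]   # hypothetical request sizes

print(allocate(holes, requests, max))  # Worst Fit: ([1, None], [300, 400])
print(allocate(holes, requests, min))  # Best Fit:  ([0, 1], [100, 100])
```

Here Worst Fit spends the 600-unit hole on the 200-unit request, so the 500-unit request no longer fits anywhere, while Best Fit satisfies both. Other request sequences can favor Worst Fit instead; the example only shows that neither policy wins in every case.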

How does Worst Fit handle fragmentation?

Worst Fit tries to limit external fragmentation by selecting larger memory blocks, so that the space left behind is less likely to be scattered across memory as small, unusable holes. However, when blocks are fixed partitions that are not split, it leads to internal fragmentation whenever the selected block is significantly larger than the process’s memory requirement.

Are there scenarios where Worst Fit may not be the best choice?

Yes, Worst Fit may not perform optimally in scenarios where there are frequent allocations and deallocations. The strategy of selecting the largest block may result in less efficient use of memory in dynamic environments.

Can Worst Fit lead to inefficiencies in memory usage?

In some cases, Worst Fit may allocate larger memory blocks than necessary, leading to internal fragmentation. This occurs when the selected block is much larger than the process’s actual memory requirement, resulting in wasted space.
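The wasted space is simply the block size minus the process size. The following sketch computes it for a few allocations, assuming fixed partitions that are not split; the function name and the (block size, process size) pairs are hypothetical.

```python
def internal_fragmentation(block_size, process_size):
    """Unused space inside a block that holds a single process."""
    if process_size > block_size:
        raise ValueError("process does not fit in the block")
    return block_size - process_size


# Hypothetical (block size, process size) pairs chosen by Worst Fit.
allocations = [(600, 212), (500, 417), (300, 112)]

wasted = [internal_fragmentation(block, process) for block, process in allocations]
print(wasted)       # [388, 83, 188]
print(sum(wasted))  # 659
```

In this example the first allocation alone wastes 388 units, more than the process itself uses.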

When should I consider using Worst Fit?

Worst Fit can be a suitable choice when the goal is to minimize external fragmentation and keep the leftover free memory in large blocks. It tends to be most effective when later requests are small enough to fit into those leftovers.

How can I implement Worst Fit in my system?

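Implementing Worst Fit involves finding the largest available block in the list of free memory blocks. The simplest approach scans the whole free list on every request. Alternatively, the free blocks can be kept sorted by size, or stored in a max-heap, so that the largest block is always found quickly. In either case the list must be updated after each allocation and deallocation. Specific implementation details may vary based on the programming language and system architecture.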
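One possible way to keep the largest block at hand is a max-heap. The sketch below uses Python's standard `heapq` module, which is a min-heap, so sizes are stored negated. The class name `WorstFitAllocator` and its methods are hypothetical, and the sketch assumes that a block is split on allocation and that memory is never freed; a real allocator would also need deallocation and merging of adjacent free blocks.

```python
import heapq


class WorstFitAllocator:
    def __init__(self, block_sizes):
        # heapq is a min-heap, so negate sizes to get the largest block first.
        self._heap = [(-size, block_id) for block_id, size in enumerate(block_sizes)]
        heapq.heapify(self._heap)

    def allocate(self, size):
        """Return the id of the block used, or None if nothing fits."""
        if not self._heap or -self._heap[0][0] < size:
            return None  # even the largest free block is too small
        neg_size, block_id = heapq.heappop(self._heap)
        remainder = -neg_size - size
        if remainder > 0:
            # Put the unused part of the block back as a smaller free block.
            heapq.heappush(self._heap, (-remainder, block_id))
        return block_id

    def free_sizes(self):
        """Sizes of the free blocks, largest first."""
        return sorted((-neg_size for neg_size, _ in self._heap), reverse=True)


allocator = WorstFitAllocator([100, 500, 200])  # hypothetical block sizes
print(allocator.allocate(150))   # 1 (taken from the 500-unit block)
print(allocator.allocate(300))   # 1 (taken from the 350-unit remainder)
print(allocator.free_sizes())    # [200, 100, 50]
print(allocator.allocate(250))   # None
```

With a heap, finding the largest block is a constant-time peek and each update costs logarithmic time, compared with a full scan of the free list on every request.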